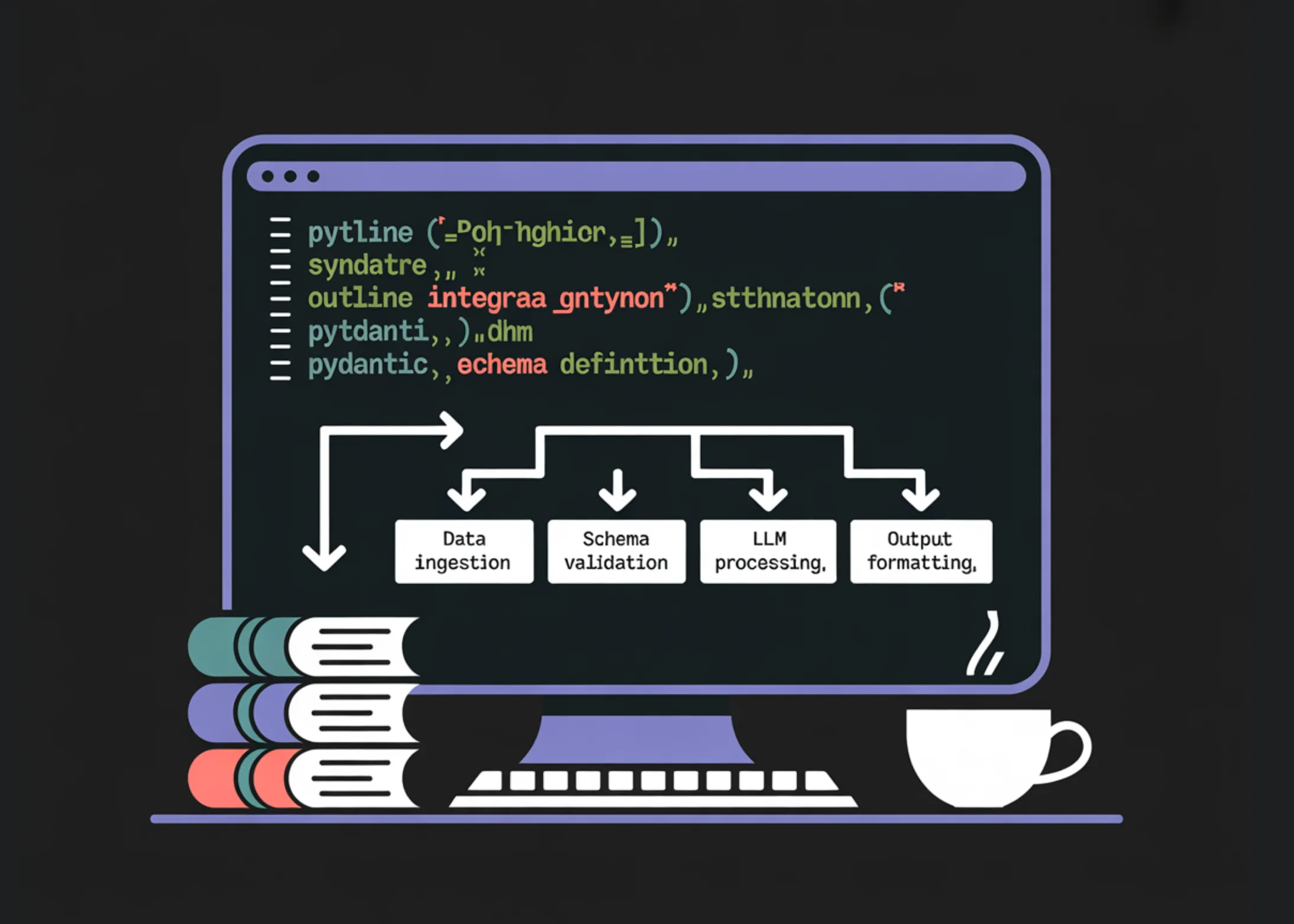

How to Build Type-Safe, Schema-Constrained, and Function-Driven LLM Pipelines Using Outlines and Pydantic

As large language models (LLMs) become more powerful, it becomes equally important to have methods for using them effectively and getting reliable results. Because text generation is unstructured by nature, outputs are often unpredictable, so controlling and structuring them is essential. Outlines and Pydantic are two tools that address this problem, and we will use them to build LLM pipelines. This tutorial shows, step by step, how to combine the two to build a type-safe, schema-constrained LLM pipeline.

Outlines is a framework for generating structured output from LLMs, and Pydantic is a library for data validation and serialization. Combining the two helps an LLM produce predictable and reliable results. This should be useful for both data scientists and developers. Let's get started.
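To make the Pydantic half of that combination concrete, the sketch below validates two JSON strings against a small schema. It is an illustration only: the `Sentiment` model, its fields, the `parse_sentiment` helper, and the sample strings are hypothetical stand-ins for real model output, and no LLM or Outlines call is involved.

```python
from typing import Literal, Optional

from pydantic import BaseModel, Field, ValidationError


class Sentiment(BaseModel):
    """Hypothetical schema for a single sentiment-analysis result."""

    # Only these three strings are accepted.
    label: Literal["Positive", "Negative", "Neutral"]
    # Must lie in the closed interval [0, 1].
    confidence: float = Field(ge=0.0, le=1.0)


def parse_sentiment(raw_json: str) -> Optional[Sentiment]:
    """Return a validated Sentiment, or None if the text does not fit the schema."""
    try:
        return Sentiment.model_validate_json(raw_json)
    except ValidationError:
        return None


# Sample strings standing in for model output (assumed, not real generations).
good = parse_sentiment('{"label": "Positive", "confidence": 0.93}')
bad = parse_sentiment('{"label": "Great", "confidence": 1.7}')
```

Here `good` is a typed object whose fields can be used directly, while `bad` is `None` because both the label and the confidence violate the schema. Outlines addresses the other side of the problem by constraining generation so that the output fits the schema to begin with.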

1. Setting up the Development Environment and Basic Libraries

First, the essential dependencies must be installed: Outlines, Transformers, Accelerate, SentencePiece, and Pydantic. The code below installs them into the current Python environment and imports everything the tutorial uses. The model can run with CUDA for GPU acceleration or on the CPU.

```python
import os, sys, subprocess, json, textwrap, re

# Install the dependencies into the interpreter that is running this script.
subprocess.check_call([
    sys.executable, "-m", "pip", "install", "-q",
    "outlines", "transformers", "accelerate", "sentencepiece", "pydantic"
])

import torch
import outlines
from transformers import AutoTokenizer, AutoModelForCausalLM
from typing import Literal, List, Union, Annotated
from pydantic import BaseModel, Field
from enum import Enum

# Report the environment: PyTorch version, CUDA availability, Outlines version.
print("Torch:", torch.__version__)
print("CUDA available:", torch.cuda.is_available())
print("Outlines:", getattr(outlines, "__version__", "unknown"))
```

This code also checks the PyTorch version, checks CUDA availability, and displays the Outlines version. This information helps confirm that the system has a suitable environment for running Outlines and LLMs. The next step is to initialize the Outlines pipeline and build a simple helper function.

2. Type-Specified Output: Literal, int, bool

Now, let's look at how to generate type-specified output from an LLM using Outlines. For example, you can instruct the LLM to perform sentiment analysis and return exactly one label (Positive, Negative, or Neutral). Outlines forces the LLM to generate only values of the specified type. The helper functions below support this by pulling a JSON object out of raw model text and validating it.

```python
def extract_json_object(s: str) -> str:
    """Return the first balanced {...} object in s.

    If the object never closes, return everything from the first "{";
    if there is no "{" at all, return s unchanged.
    """
    s = s.strip()
    start = s.find("{")
    if start == -1:
        return s
    depth = 0
    in_str = False  # currently inside a JSON string literal
    esc = False     # previous character was a backslash
    for i in range(start, len(s)):
        ch = s[i]
        if in_str:
            if esc:
                esc = False
            elif ch == "\\":
                esc = True
            elif ch == '"':
                in_str = False
        else:
            if ch == '"':
                in_str = True
            elif ch == "{":
                depth += 1
            elif ch == "}":
                depth -= 1
                if depth == 0:
                    return s[start:i + 1]
    return s[start:]

def json_repair_minimal(bad: str) -> str:
    """Drop anything that follows the last closing brace."""
    bad = bad.strip()
    last = bad.rfind("}")
    if last != -1:
        return bad[:last + 1]
    return bad

def safe_validate(model_cls, raw_text: str):
    """Validate raw_text against a Pydantic model, retrying once after a minimal repair."""
    raw = extract_json_object(raw_text)
    try:
        return model_cls.model_validate_json(raw)
    except Exception:
        raw2 = json_repair_minimal(raw)
        return model_cls.model_validate_json(raw2)
```

This code snippet defines utility functions that recover a usable JSON object from imperfect model text and safely validate the output returned by the LLM. The same typed approach extends to integers and booleans. Typed outputs like these matter because they ensure that the data adheres to the expected format.

3. Using Prompt Templates

Next, let's look at how to generate more structured prompts using the template function of Outlines.
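As a rough illustration of the template idea, the sketch below fills a fixed prompt skeleton with user input. Outlines templates are Jinja2-based; this sketch deliberately swaps in `string.Template` from the Python standard library so that it runs without a model or extra dependencies. The prompt wording, `SENTIMENT_PROMPT`, and `build_prompt` are hypothetical names chosen for this example.

```python
from string import Template

# Hypothetical prompt skeleton: the role text and the output constraint are
# fixed, and only the review and the label list are filled in at call time.
SENTIMENT_PROMPT = Template(
    "System: You are a precise assistant.\n"
    "User: Classify the sentiment of the review below.\n"
    "Review: $review\n"
    "Answer with exactly one of: $labels"
)


def build_prompt(review, labels=("Positive", "Negative", "Neutral")):
    """Insert the user's review into the skeleton without touching the instructions."""
    return SENTIMENT_PROMPT.substitute(
        review=review.strip(),
        labels=", ".join(labels),
    )


prompt = build_prompt("  The battery died after two days.  ")
print(prompt)
```

Because every call goes through the same skeleton, the instructions and the allowed labels stay identical across requests, and only the user-supplied text varies.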

Outlines templates allow you to dynamically insert user input into prompts while maintaining the role format and output constraints. This improves reusability and keeps responses consistent. Templates make it easier to control the behavior of the LLM.

4. Structured Output with Pydantic (Advanced Constraints)

Now, let's look at how to define more complex constraints using Pydantic and control the structure of the output generated by the LLM. For example, you can define a service-ticket Pydantic model that includes information such as ticket priority, category, and details, and then instruct the LLM to generate a JSON object that adheres to this model. This makes the LLM's output more structured and predictable.

5. Function Calling Style (Schema -> Arguments -> Call)

Finally, let's look at how to safely execute Python functions through the LLM using the function-calling style. First, you instruct the LLM to generate the arguments to be used as input for the function. Then these arguments are validated, and the Python function is called. This approach is useful for performing complex calculations driven by the LLM's output. It extends what the LLM can do and makes it usable in a wider range of applications.

Conclusion

In this tutorial, we have looked at how to build LLM pipelines using Outlines and Pydantic. These tools let you control and structure LLM output more effectively, leading to more reliable and predictable results. By following these guidelines, you gain the tools and knowledge needed to build LLM-based applications.

Original Source: How to Build Type-Safe, Schema-Constrained, and Function-Driven LLM Pipelines Using Outlines and Pydantic
