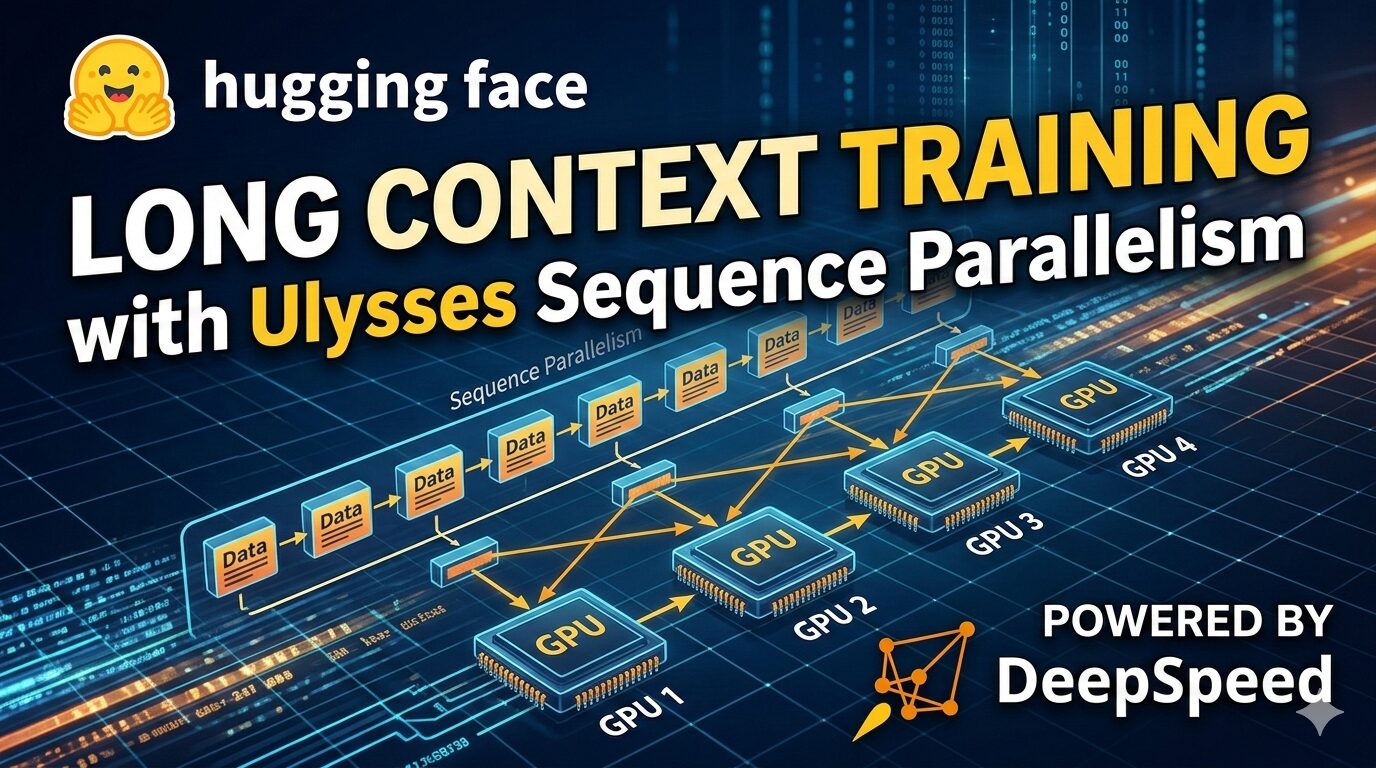

Ulysses Sequence Parallelism: Training with Million-Token Contexts

Sequence parallelism has recently become an important tool for training large language models on long inputs. Many tasks, such as document analysis, code understanding, complex reasoning, and retrieval-augmented generation (RAG) workloads, require processing sequences of tens of thousands to millions of tokens. An average book is roughly 250,000 tokens, so handling book-length or multi-document inputs means working with sequences that exceed the memory capacity of a single GPU.

However, training with such long contexts poses significant memory challenges. Attention in transformers scales quadratically with sequence length, so beyond tens of thousands of tokens the memory required can easily exceed a single GPU’s capacity. Ulysses sequence parallelism (part of Snowflake AI Research’s Arctic Long Sequence Training (ALST) protocol) addresses this by distributing the attention computation across multiple GPUs.

The Challenge of Long Sequence Training

The attention mechanism in transformers scales quadratically with sequence length. For a sequence of length n, standard attention requires O(n²) FLOPs and O(n²) memory to compute and store the attention score matrix. Optimized implementations like FlashAttention reduce the memory to O(n) by tiling the computation so that the full score matrix is never materialized, but the O(n²) compute remains. Activations in the rest of the model also grow with n, so for very long sequences (32k+ tokens) a single GPU's memory can still be exceeded even with FlashAttention.
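To make the quadratic term concrete, the short sketch below estimates the size of a fully materialized attention score matrix for one layer. The head count of 32 and the 2-byte (bf16/fp16) element size are illustrative assumptions, not figures from the article, and the helper name `attention_score_bytes` is hypothetical.

```python
def attention_score_bytes(seq_len: int, num_heads: int, bytes_per_element: int = 2) -> int:
    """Bytes needed to store one layer's full (seq_len x seq_len) score matrix per head."""
    return seq_len * seq_len * num_heads * bytes_per_element


GIB = 1024 ** 3

# Assumed configuration: 32 heads, 2 bytes per score, batch size 1.
for seq_len in (4_096, 32_768, 131_072):
    size = attention_score_bytes(seq_len, num_heads=32)
    print(f"{seq_len:>7} tokens: {size / GIB:,.0f} GiB")
```

Under these assumptions, 32,768 tokens already correspond to 64 GiB of scores for a single layer, which is why implementations that never materialize the matrix are needed before sequence sharding even enters the picture.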

Scenarios where long-context training is essential include:

- Document Understanding: Processing entire books, legal documents, or research papers.

- Code Analysis: Understanding large codebases containing multiple interconnected files.

- Reasoning Tasks: Models that “think” step-by-step can generate thousands of tokens during reasoning.

- Retrieval-Augmented Generation: Integrating multiple retrieved passages into the context.

Traditional data parallelism does not help solve these problems, as each GPU still needs to process the entire sequence within an attention block. Therefore, a method is needed to distribute the sequence itself across multiple devices.

How Ulysses Works

Ulysses sequence parallelism (introduced in the DeepSpeed Ulysses paper) takes a clever approach. In addition to splitting the sequence dimension, it also splits the attention heads across GPUs. This leverages the fact that attention heads can be computed independently, enabling efficient parallelization with low communication overhead.

Ulysses partitions the input sequence along the sequence dimension and uses all-to-all communication to exchange the query, key, and value tensors, so that each GPU computes attention for a subset of the heads.

The specific operation is as follows:

- Sequence Sharding: The input sequence is partitioned along the sequence dimension across P GPUs. Each GPU i holds tokens [i⋅n/P,(i+1)⋅n/P).

- QKV Projection: Each GPU computes query, key, and value projections for the local sequence chunk.

- All-to-All Communication: An all-to-all collective operation redistributes the data so that each GPU holds all sequence positions, but only for a subset of the attention heads.

- Local Attention: Each GPU uses the standard attention mechanism (FlashAttention or SDPA) to compute attention for the assigned heads.

- All-to-All Communication: A second all-to-all operation reverses the redistribution, returning the data to the sequence-sharded layout.

- Output Projection: Each GPU computes the output projection for the local sequence chunk.
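The steps above can be sketched as a single-process simulation in plain Python. This is a toy model under stated assumptions, not the DeepSpeed implementation: ranks are simulated as list entries, the `all_to_all` helper is a hypothetical stand-in for the real collective, the QKV and output projections are skipped (q, k, v are taken as already projected), and both the sequence length and the head count are assumed to be divisible by P.

```python
import math
import random


def attention(q, k, v):
    """Softmax attention for one head; q, k, v are lists of n vectors of size d."""
    d = len(q[0])
    out = []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        top = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) / total
                    for c in range(d)])
    return out


def all_to_all(send):
    """Simulated collective (hypothetical helper): send[src][dst] becomes recv[dst][src]."""
    P = len(send)
    return [[send[src][dst] for src in range(P)] for dst in range(P)]


def reference_attention(q, k, v):
    """Multi-head attention on one device; inputs are indexed [token][head] -> vector."""
    n, H = len(q), len(q[0])
    heads = [attention([q[t][h] for t in range(n)],
                       [k[t][h] for t in range(n)],
                       [v[t][h] for t in range(n)]) for h in range(H)]
    return [[heads[h][t] for h in range(H)] for t in range(n)]


def ulysses_attention(q, k, v, P):
    """Same result as reference_attention, computed with P simulated ranks."""
    n, H = len(q), len(q[0])
    assert n % P == 0 and H % P == 0, "sequence length and head count must divide by P"
    tok, hd = n // P, H // P
    # Sequence sharding: rank r owns tokens [r*tok, (r+1)*tok) for all heads.
    shards = [[(q[t], k[t], v[t]) for t in range(r * tok, (r + 1) * tok)]
              for r in range(P)]
    # First all-to-all: each rank sends rank dst the head slice that dst will own.
    send = [[[tuple(x[dst * hd:(dst + 1) * hd] for x in qkv) for qkv in shards[src]]
             for dst in range(P)] for src in range(P)]
    recv = all_to_all(send)
    # Local attention: every rank now sees all n tokens for its hd heads.
    local_out = []
    for r in range(P):
        tokens = [item for chunk in recv[r] for item in chunk]  # global token order
        local_out.append([attention([x[0][h] for x in tokens],
                                    [x[1][h] for x in tokens],
                                    [x[2][h] for x in tokens]) for h in range(hd)])
    # Second all-to-all: send each rank the outputs for the tokens it owns.
    send_back = [[[[local_out[src][h][t] for h in range(hd)]
                   for t in range(dst * tok, (dst + 1) * tok)]
                  for dst in range(P)] for src in range(P)]
    recv_back = all_to_all(send_back)
    # Reassemble all heads per local token; result is indexed [token][head] -> vector.
    return [[vec for src in range(P) for vec in recv_back[r][src][i]]
            for r in range(P) for i in range(tok)]


def random_tensor(n, H, d):
    return [[[random.gauss(0, 1) for _ in range(d)] for _ in range(H)] for _ in range(n)]


random.seed(0)
n, H, d, P = 8, 4, 3, 2
q, k, v = random_tensor(n, H, d), random_tensor(n, H, d), random_tensor(n, H, d)
sharded = ulysses_attention(q, k, v, P)
full = reference_attention(q, k, v)
max_diff = max(abs(a - b) for t in range(n) for h in range(H)
               for a, b in zip(sharded[t][h], full[t][h]))
print("max difference vs. single-device attention:", max_diff)
```

Because each head is computed over the full sequence on exactly one rank, the sharded result should match single-device attention; the only thing that changes between the two all-to-all calls is which axis (tokens or heads) is split across ranks.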

Communication Complexity

Ulysses requires two all-to-all operations per attention layer, with a total communication volume of O(n⋅d/P) per GPU, where n is the sequence length, d is the hidden dimension, and P is the degree of parallelism. Ring Attention, by comparison, communicates O(n⋅d) per GPU, a factor of P more, through P−1 sequential point-to-point transfers around the ring. Ulysses also tends to have lower latency, because an all-to-all can use the full bisection bandwidth of the interconnect in a single collective step, whereas Ring Attention is serialized over P−1 hops.
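As a rough illustration of these asymptotic volumes, the sketch below counts tensor elements communicated per GPU per attention layer. The constant factors are assumptions made for this example only (four tensors of shape (n/P, d) for Ulysses: Q, K, V in and the attention output back; K and V blocks forwarded on each of the P−1 hops for Ring Attention), and the function names are hypothetical.

```python
def ulysses_elements_per_gpu(n: int, d: int, P: int) -> int:
    """Assumed model: Q, K, V and the attention output, each of shape (n/P, d)."""
    return 4 * n * d // P


def ring_elements_per_gpu(n: int, d: int, P: int) -> int:
    """Assumed model: K and V blocks of shape (n/P, d) forwarded on each of P-1 hops."""
    return 2 * (P - 1) * n * d // P


n, d = 131_072, 4_096  # illustrative sequence length and hidden dimension
for P in (2, 4, 8):
    u = ulysses_elements_per_gpu(n, d, P)
    r = ring_elements_per_gpu(n, d, P)
    print(f"P={P}: Ulysses {u:,} elements, Ring {r:,} elements, ratio {r / u:.2f}")
```

In this counting model the Ulysses volume shrinks as P grows while the Ring Attention volume approaches a constant 2⋅n⋅d, which matches the O(n⋅d/P) versus O(n⋅d) comparison above; real measurements also depend on interconnect topology and overlap with compute.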

Integration with Accelerate

Accelerate provides a foundation for Ulysses sequence parallelism through the ParallelismConfig class and DeepSpeed integration, enabling efficient training across various hardware environments.

Best Practices

To get the most out of Ulysses sequence parallelism, follow these best practices:

- Make the sequence length divisible by sp_size, padding if necessary.

- Use FlashAttention to accelerate the attention operation.

- Combine Ulysses with DeepSpeed ZeRO to further reduce memory usage.

- Configure the PyTorch CUDA caching allocator to reduce memory fragmentation (for example, by enabling expandable segments).

- Tune the 2D parallelism configuration (data parallel × sequence parallel) to optimize GPU utilization.

- Use Liger-Kernel to improve performance.

- Consider how tokens are distributed across ranks, since an uneven split reduces efficiency.
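The divisibility requirement on sp_size can be checked, or satisfied by padding, with a few lines of Python. This is a minimal sketch; `padded_length` and `tokens_per_rank` are hypothetical helper names and are not part of Accelerate or DeepSpeed.

```python
def padded_length(seq_len: int, sp_size: int) -> int:
    """Smallest multiple of sp_size that is greater than or equal to seq_len."""
    return -(-seq_len // sp_size) * sp_size  # ceiling division, then scale back up


def tokens_per_rank(seq_len: int, sp_size: int) -> int:
    """Tokens held by each sequence-parallel rank; requires an even split."""
    if seq_len % sp_size != 0:
        raise ValueError(
            f"sequence length {seq_len} is not divisible by sp_size {sp_size}; "
            f"pad to {padded_length(seq_len, sp_size)}"
        )
    return seq_len // sp_size


seq_len, sp_size = 100_001, 8
target = padded_length(seq_len, sp_size)
print(f"pad {seq_len} -> {target}, {tokens_per_rank(target, sp_size)} tokens per rank")
```

Padding up to the next multiple keeps every rank's shard the same size, which is what the sequence-sharding step assumes.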

By following these best practices, you can effectively train with very long sequences using Ulysses sequence parallelism and improve the performance of large language models.

Original Source: Ulysses Sequence Parallelism: Training with Million-Token Contexts
