Precision Regression: Quantifying Production Fragility Caused by Excessive Features

As artificial intelligence models have grown more complex, it has become common to add features simply to raise model performance. More features can look like a pure gain, but they carry hidden structural risks that are easy to overlook. The intuition that a model predicts better the more information it learns from often clashes with reality and causes unexpected problems.

This article critically examines how excessive features can undermine the reliability of precision regression models, and discusses the causes and remedies in depth. Its central claim is that blindly adding features to increase accuracy can compromise model stability and increase production fragility, a risk it illustrates with examples. It also explains why removing excessive features and keeping models concise matters, what benefits follow, and what to consider when securing model stability and reliability, including the importance of feature engineering.

Hidden Risks of Adding Features: Structural Fragility

Adding features not only increases model complexity but also increases dependence on various elements, such as the upstream data pipeline, external systems, and data quality checks. Even small changes, such as missing fields, schema changes, or delayed datasets, can degrade prediction accuracy. This structural fragility makes model maintenance and management more difficult and can reduce the reliability of prediction results.
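
The dependence argument above can be made concrete with a little arithmetic. The sketch below is a simplification, not the original article's method: it assumes every feature arrives intact with the same probability on each scoring run and that failures are independent, which real pipelines rarely satisfy because features often share upstream sources. The function name and the 0.99 availability figure are hypothetical.

```python
def prob_all_inputs_healthy(n_features: int, per_feature_availability: float) -> float:
    """Probability that every feature arrives intact on a given scoring run.

    Assumes each feature fails independently with the same availability,
    which is a simplification: real features often share upstream sources.
    """
    if n_features < 0 or not 0.0 <= per_feature_availability <= 1.0:
        raise ValueError("need n_features >= 0 and availability in [0, 1]")
    return per_feature_availability ** n_features


# Compare a lean model with progressively wider ones at 99% availability.
for n in (5, 20, 50):
    print(n, round(prob_all_inputs_healthy(n, 0.99), 3))
```

Under these assumptions, a model with 5 inputs sees a fully healthy input set on about 95% of runs, while one with 50 inputs does so on only about 61% of runs.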

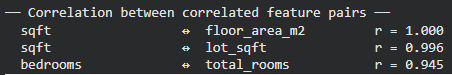

Coefficient Instability and Meaningless Influence Dispersion

Adding features indiscriminately raises computational cost and system complexity, and it also causes coefficient instability when features are correlated. When features are highly correlated or carry little information, the optimizer cannot apportion influence among them cleanly, so coefficients shift unpredictably from one fit to the next. This impairs interpretability and is a major cause of inconsistent predictions. Variables with weak signals may be treated as important even though they are likely noise reflecting meaningless patterns. The result is a model that looks sophisticated on paper but predicts inconsistently in practice.
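
One way to see this instability is to refit the same model on bootstrap resamples and watch how much a coefficient moves. The following is a minimal sketch on synthetic data, assuming ordinary least squares via NumPy. The variables `x1` and `x2`, the noise levels, and the function name are all illustrative assumptions and do not come from the original article.

```python
import numpy as np


def coefficient_spread(n_samples=200, n_resamples=200, seed=0):
    """Return the bootstrap std of the x1 coefficient, without and with a near-duplicate."""
    rng = np.random.default_rng(seed)
    x1 = rng.normal(size=n_samples)
    x2 = x1 + 0.01 * rng.normal(size=n_samples)  # near-duplicate of x1
    y = 3.0 * x1 + rng.normal(scale=0.5, size=n_samples)

    lean, redundant = [], []
    for _ in range(n_resamples):
        idx = rng.integers(0, n_samples, size=n_samples)  # bootstrap resample
        ones = np.ones(n_samples)
        X_lean = np.column_stack([ones, x1[idx]])
        X_red = np.column_stack([ones, x1[idx], x2[idx]])
        # Index 1 is the coefficient on x1 in both design matrices.
        lean.append(np.linalg.lstsq(X_lean, y[idx], rcond=None)[0][1])
        redundant.append(np.linalg.lstsq(X_red, y[idx], rcond=None)[0][1])
    return float(np.std(lean)), float(np.std(redundant))


lean_std, redundant_std = coefficient_spread()
print(f"std of x1 coefficient, x1 only:      {lean_std:.3f}")
print(f"std of x1 coefficient, x1 plus copy: {redundant_std:.3f}")
```

The data-generating process is identical in both fits. Only the presence of the redundant column changes, yet the coefficient on `x1` becomes far less stable, because the fit can trade weight between `x1` and `x2` almost freely.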

Production Fragility and Maintenance Difficulties

Excessive features increase the model’s production fragility. Each time new data arrives, the model has to reconcile it with what it learned from existing data. The more unnecessary features there are, the more variables the model must account for, which compromises stability and reduces the consistency of predictions. Unnecessary features also make maintenance harder: when individual features are poorly understood, the model’s behavior is hard to explain, and errors are hard to identify and correct. Removing unnecessary features can improve model performance and productivity.
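
Part of the maintenance burden is simply knowing which input broke. As a hedged illustration, a small guard in front of the model can name the offending fields before a prediction is made. The function name, record layout, and feature names below are hypothetical, and a real pipeline would likely add type and range checks.

```python
import math


def find_unusable_features(record, required_features):
    """Return the required feature names that are absent, None, or NaN."""
    problems = []
    for name in required_features:
        value = record.get(name)  # missing keys come back as None
        if value is None or (isinstance(value, float) and math.isnan(value)):
            problems.append(name)
    return problems


# Hypothetical incoming record with one null, one NaN, and one absent field.
record = {"area": 84.0, "rooms": None, "age": float("nan")}
print(find_unusable_features(record, ["area", "rooms", "age", "floor"]))
```

Every feature added to the model is another name this guard has to cover, which is one concrete way the maintenance cost grows with feature count.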

Case Study: Real Estate Price Prediction Model

The article uses a real estate price prediction model to demonstrate how excessive features affect model reliability. It compares a model with a large number of features against one that uses only a few core features, and simulates how the excess features compromise stability.
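
The comparison described above might be sketched as follows. This is not the original article's experiment or data. It is a minimal simulation on synthetic, standardized inputs, assuming a hypothetical price formula, 20 redundant feeds that are noisy copies of `area`, and an outage in which one redundant feed is lost and imputed with 0.

```python
import numpy as np


def simulate_feed_outage(n_train=300, n_test=300, n_redundant=20, seed=0):
    """Compare a lean and a wide OLS model when one redundant feed goes missing."""
    rng = np.random.default_rng(seed)
    n = n_train + n_test
    area = rng.normal(size=n)   # standardized floor area (synthetic)
    rooms = rng.normal(size=n)  # standardized room count (synthetic)
    price = 2.0 * area + 1.0 * rooms + rng.normal(scale=0.5, size=n)

    # Redundant feeds: noisy copies of `area` that add no new information.
    redundant = area[:, None] + 0.1 * rng.normal(size=(n, n_redundant))
    X_lean = np.hstack([np.ones((n, 1)), area[:, None], rooms[:, None]])
    X_wide = np.hstack([X_lean, redundant])

    tr, te = slice(0, n_train), slice(n_train, n)
    results = {}
    for name, X in (("lean", X_lean), ("wide", X_wide)):
        coef = np.linalg.lstsq(X[tr], price[tr], rcond=None)[0]
        X_out = X[te].copy()
        if name == "wide":
            X_out[:, 3] = 0.0  # column 3 is the first redundant feed
        clean, broken = X[te] @ coef, X_out @ coef
        results[name] = {
            "rmse_clean": float(np.sqrt(np.mean((price[te] - clean) ** 2))),
            "rmse_outage": float(np.sqrt(np.mean((price[te] - broken) ** 2))),
            "mean_abs_shift": float(np.mean(np.abs(broken - clean))),
        }
    return results


for model, stats in simulate_feed_outage().items():
    print(model, stats)
```

The lean model never consumes the lost feed, so its predictions do not move. The wide model's predictions shift even though the lost feed carried no information beyond `area`, and that shift is the fragility being measured.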

Conclusion: Balancing Conciseness and Stability

Blindly adding features to increase model accuracy can undermine model reliability and increase production fragility. Model development should weigh stability, maintainability, and interpretability alongside accuracy. Removing unnecessary features and keeping models concise is essential to improving model performance and making predictions more reliable.

In conclusion, building precision regression models calls for careful feature engineering and a continuous effort to secure model stability. Excessive features increase model complexity, reduce the consistency of predictions, and make maintenance difficult. Maintaining a balance between conciseness and stability is key to successful model development.
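
As one illustration of removing redundant features, a simple greedy filter can drop any column that is almost perfectly correlated with one already kept. This is a sketch of a common heuristic and not a method named in the original article. The 0.95 threshold and the function name are assumptions, and the filter does not detect low-signal features that are uncorrelated with the rest.

```python
import numpy as np


def prune_correlated(X, threshold=0.95):
    """Return indices of columns to keep, dropping near-duplicates of kept columns."""
    corr = np.abs(np.corrcoef(X, rowvar=False))  # pairwise |correlation| of columns
    kept = []
    for j in range(X.shape[1]):
        # Keep column j only if it is not too similar to any column kept so far.
        if all(corr[j, k] < threshold for k in kept):
            kept.append(j)
    return kept


rng = np.random.default_rng(0)
a = rng.normal(size=500)
b = a + 0.01 * rng.normal(size=500)  # near-duplicate of a
c = rng.normal(size=500)             # independent feature
print(prune_correlated(np.column_stack([a, b, c])))
```

Because the filter is greedy, column order decides which member of a correlated pair survives, so it makes sense to order columns with the best-understood or most reliable features first.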


Original source: Beyond Accuracy: Quantifying the Production Fragility Caused by Excessive, Redundant, and Low-Signal Features in Regression
