New Hyperagents: AI Rewrites Learning Rules, Ushering in a New Era

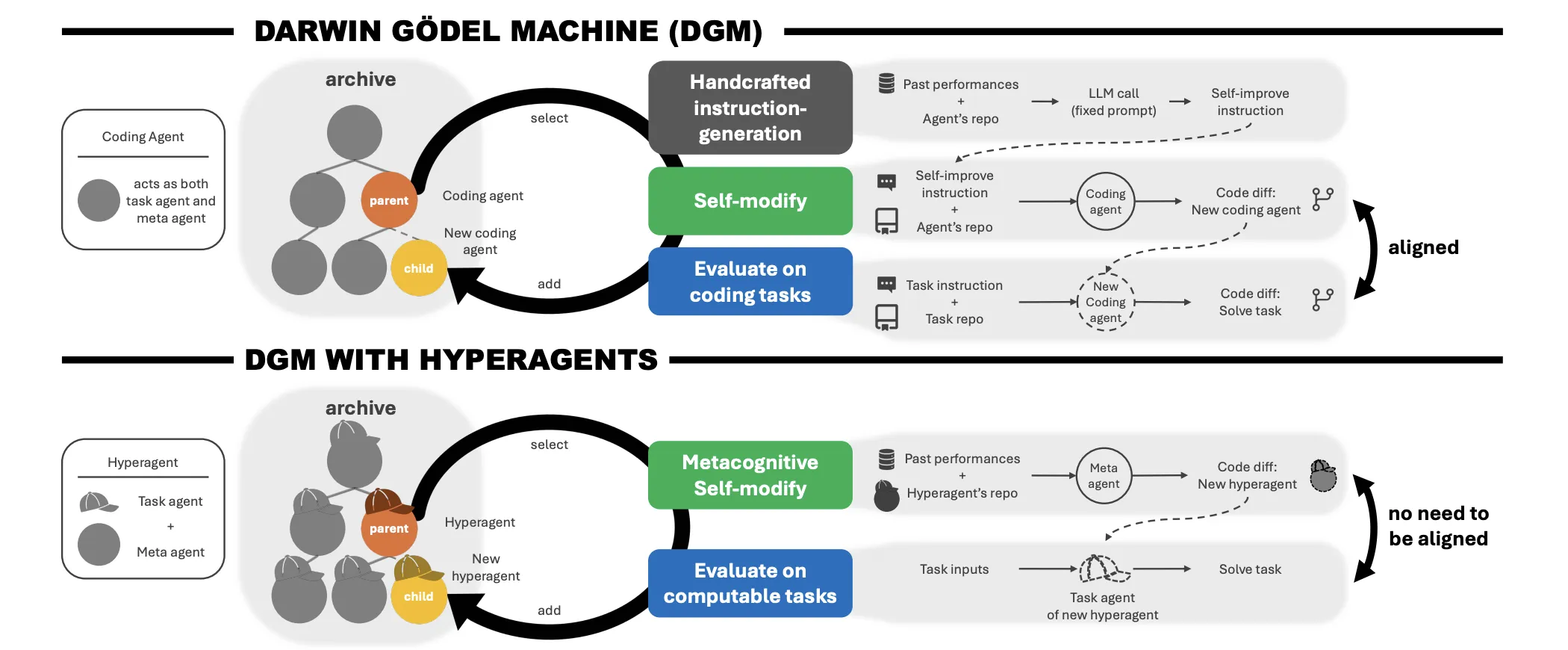

In artificial intelligence (AI), ‘recursive self-improvement’ is the long-held dream of systems that do not just get better at specific tasks but learn how to learn better. It has long been considered a somewhat fantastical goal, likened to the hunt for Tutankhamun’s golden mask. Theoretical models existed, but implementing them in real-world environments proved difficult. The Darwin Gödel Machine (DGM) has begun to change this: it demonstrated that self-improvement is possible in the coding domain, bringing the goal a step closer. The DGM still left important problems unsolved, however.

The DGM’s main limitation was that it relied on meta-level mechanisms designed by humans, which effectively capped the system’s growth at the ability of its human designers. To address this, researchers affiliated with the University of British Columbia, Vector Institute, University of Edinburgh, New York University, Canada CIFAR AI Chair, FAIR at Meta, and Meta Superintelligence Labs proposed a framework called Hyperagents. The framework makes the meta-level modification procedure itself editable, which decouples the ability to self-modify from performance on the task itself. This gives the system more freedom in how it evolves.

1. The Meta-Level Infinite Regress Problem: A Challenge to Overcome

One of the biggest obstacles for earlier self-improving systems is ‘infinite regress’: solving the problem at one level simply recreates it at the level above. If a system has a task agent (the component that solves the problem) and a meta-agent (the component that improves the task agent), then something must improve the meta-agent, which calls for a ‘meta-meta’ agent, and so on. Pushing the problem up the chain does not solve it; it only postpones it. This regress has been a significant barrier in AI research, and Hyperagents are designed to break the chain.

2. Hyperagents: Integration into a Single, Editable Program

The DGM-Hyperagent (DGM-H) framework addresses this by integrating the task agent and the meta-agent into a single, self-referential, fully editable program. Here, an agent is defined as any computable program that can invoke foundation models (FMs) and external tools. Because the code that performs modifications is part of the program being modified, no separate higher-level agent is needed. It is like building everything from the same Lego bricks, so that any piece, including the one doing the rebuilding, can be swapped out. This integration is what lets hyperagents improve their own improvement procedure.
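To make the idea of a single, self-referential program concrete, here is a minimal Python sketch. It is not the researchers' implementation: the names `Hyperagent`, `INITIAL_SOURCE`, `solve_task`, `modify`, `GAIN`, and `STEP` are all hypothetical, and a fixed string rewrite stands in for the foundation-model call that a real system would use to propose edits. The point it illustrates is that the modification procedure lives in the same source text it edits, so it can rewrite itself along with the task logic.

```python
# Hypothetical sketch: the whole agent is one piece of editable source code.
INITIAL_SOURCE = '''
GAIN = 1   # task-level parameter
STEP = 1   # meta-level parameter: how aggressively modify() edits

def solve_task(x):
    # Task-level behaviour.
    return GAIN * x

def modify(source):
    # Meta-level behaviour: returns a new version of the WHOLE program.
    # A real system would ask a foundation model for the edit (assumption);
    # here it is a deterministic string rewrite.
    new = source.replace(f"GAIN = {GAIN}", f"GAIN = {GAIN + STEP}", 1)
    # The meta level also rewrites its own parameter.
    new = new.replace(f"STEP = {STEP}", f"STEP = {STEP * 2}", 1)
    return new
'''


class Hyperagent:
    """Loads a program from source and exposes its task and meta behaviour."""

    def __init__(self, source: str):
        self.source = source
        self.namespace: dict = {}
        # Execute the source so solve_task and modify become callable.
        exec(source, self.namespace)

    def solve_task(self, x):
        return self.namespace["solve_task"](x)

    def self_modify(self) -> "Hyperagent":
        # The agent's own modify() produces the next generation's source.
        new_source = self.namespace["modify"](self.source)
        return Hyperagent(new_source)


gen0 = Hyperagent(INITIAL_SOURCE)
gen1 = gen0.self_modify()
gen2 = gen1.self_modify()
print(gen0.solve_task(3), gen1.solve_task(3), gen2.solve_task(3))
```

Each generation changes both how it solves the task (`GAIN`) and how it will modify itself next time (`STEP`), without any separate meta-meta agent. A real system would also evaluate each candidate before keeping it, which this sketch omits.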

3. Performance Improvements Across Diverse Fields: Coding, Paper Review, Robot Control

The research team tested DGM-H in several domains: coding, paper review, robot reward design, and Olympiad-level mathematics problem solving. In robot reward design, the hyperagent improved from an initial score of 0.060 to 0.372 (CI: 0.355–0.436). This gain reflects the discovery of more effective strategies rather than a mere climb to a local optimum. In paper review, performance rose from 0.0 to 0.710 (CI: 0.590–0.750), significantly outperforming existing static baselines.

4. Transfer of Learning Capabilities: Presenting New Possibilities

The research team also found that meta-level improvements are general and transferable. Much as experience with several languages makes the next one easier to learn, self-improvement skills acquired in one domain carry over to another. Hyperagents optimized on the paper review and robot tasks were transferred to Olympiad-level mathematics problem solving. The meta-agent from the human-designed DGM run failed to produce any improvement in the new environment (imp@50 = 0.0), whereas the transferred DGM-H hyperagent achieved an imp@50 of 0.630. This suggests that the system autonomously acquires transferable self-improvement strategies.
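The article does not define imp@50. One plausible reading, offered here purely as an assumption, is "the best gain over the starting agent within the first 50 modification steps." The Python sketch below computes a metric of that shape; the function name `imp_at_k` and the exact formula are hypothetical and may differ from the researchers' definition.

```python
def imp_at_k(initial_score: float, scores: list[float], k: int = 50) -> float:
    """Best improvement over the initial agent within the first k steps.

    ASSUMPTION: this is one plausible reading of imp@k, not a definition
    taken from the source. `scores` holds the evaluated score of the agent
    produced at each modification step, in order.
    """
    # Include the initial score so the result is 0.0 when nothing improves.
    best = max([initial_score] + scores[:k])
    return best - initial_score


# A run that never beats its starting point scores 0.0 under this reading.
print(imp_at_k(0.4, [0.1, 0.2, 0.3]))
```

Under this reading, a value of 0.0 means no generated variant beat the agent the run started from, which matches the article's description of the DGM meta-agent failing to improve in the new domain.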

5. AI Evolution: Building Infrastructure for Itself

Notably, the hyperagent developed engineering tools to support its own growth without being explicitly instructed to. These included classes for performance tracking, persistent memory for storing insights and causal hypotheses, and compute-aware planning that adjusts the modification strategy to the remaining experiment budget. In other words, the system is not merely solving the problems it is given but building the infrastructure for its own improvement. This autonomous infrastructure building opens new possibilities for AI research.
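The article does not show the code the hyperagent wrote, so the following Python sketch is only an illustration of what the three kinds of tooling might look like. Every name (`PerformanceTracker`, `InsightMemory`, `plan_strategy`) and every threshold is a hypothetical choice made for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PerformanceTracker:
    """Records the score of every evaluated agent variant (hypothetical)."""
    scores: list[float] = field(default_factory=list)

    def record(self, score: float) -> None:
        self.scores.append(score)

    def best(self) -> float:
        return max(self.scores, default=0.0)

    def stalled(self, window: int = 3) -> bool:
        """True if none of the last `window` scores beat the earlier best."""
        if len(self.scores) <= window:
            return False
        earlier_best = max(self.scores[:-window])
        return max(self.scores[-window:]) <= earlier_best


@dataclass
class InsightMemory:
    """Keeps free-text insights and hypotheses across iterations."""
    notes: list[str] = field(default_factory=list)

    def remember(self, note: str) -> None:
        self.notes.append(note)

    def recall(self, keyword: str) -> list[str]:
        return [n for n in self.notes if keyword.lower() in n.lower()]


def plan_strategy(remaining: int, total: int, tracker: PerformanceTracker) -> str:
    """Pick a modification strategy from the remaining experiment budget.

    The 20% cutoff is an arbitrary illustrative threshold.
    """
    if remaining / total < 0.2:
        return "consolidate"  # little budget left: polish the best variant
    if tracker.stalled():
        return "explore"      # progress has stalled: try a new direction
    return "refine"           # otherwise keep improving the current line


tracker = PerformanceTracker()
for s in [0.1, 0.3, 0.2, 0.3, 0.25]:
    tracker.record(s)

memory = InsightMemory()
memory.remember("Hypothesis: longer reward shaping hurts stability")
print(plan_strategy(50, 100, tracker), memory.recall("reward"))
```

The design choice worth noting is that the planner reads from the tracker: strategy depends on both how much budget is left and whether recent modifications have actually helped.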

Future Outlook: The Era of AI Self-Improvement

The DGM-H results are expected to have a significant impact on AI research. Hyperagents point toward AI systems that evolve in more flexible and adaptable ways. Over time, AI may move beyond being merely a tool to become a partner that learns, evolves, and collaborates with humans.

These technologies also raise ethical and social questions that need in-depth discussion, such as whether an AI’s self-improvement capabilities could become uncontrollable and how the development of AI will affect human employment. Even so, the emergence of hyperagents marks a new chapter in AI research.


Meta AI’s New Hyperagents Don’t Just Solve Tasks—They Rewrite the Rules of How They Learn
