AI Development Acceleration: Bridging the Gap Between Past and Present

Artificial intelligence (AI) development is progressing at an unprecedented rate. Just a few years ago, training AI models required immense time and resources. Implementing complex algorithms, collecting vast amounts of data, and learning models based on them were significant burdens for both companies and individual developers. But what about now? Thanks to improvements in hardware performance, software optimization, and better dataset utilization, training times have been drastically reduced.

For example, training tasks that used to take weeks can now be completed in a matter of hours. This change is driving the popularization of AI technology and creating an environment where more people can participate in AI development. This is particularly good news for individual researchers and small businesses. Now, everyone has the opportunity to train and experiment with powerful AI models.

Nanochat: Opening New Horizons for GPT-2 Model Training

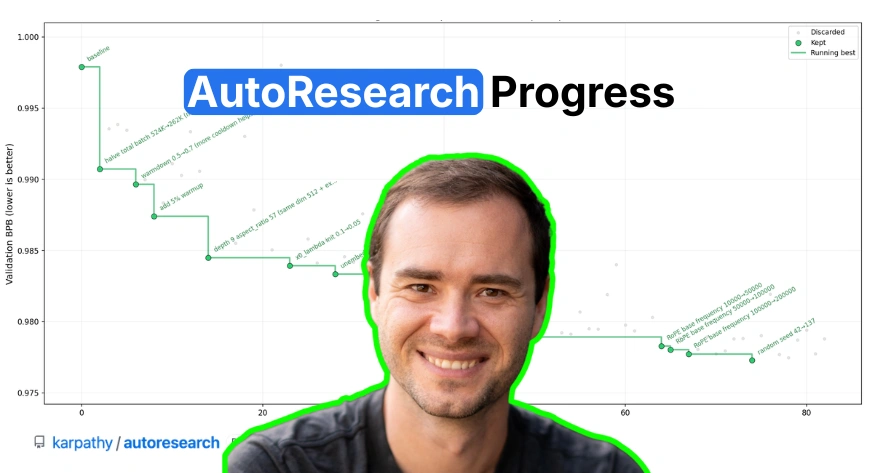

Recent updates from AI researcher Andrej Karpathy have made this change even more apparent. The Nanochat open-source project has revealed that it can train a model at the GPT-2 level in just 2 hours using 8× NVIDIA H100 GPUs on a single node. This news has caused a significant ripple effect in the AI industry and has presented new possibilities for GPT-2 model training.

The core of the Nanochat project lies in building an efficient training pipeline. By eliminating unnecessary steps, maximizing parallel processing, and optimizing memory usage, it has made various technical efforts. Furthermore, it has leveraged the latest hardware, such as NVIDIA H100 GPUs, to further improve training speed. Thanks to these efforts, Nanochat can train high-quality GPT-2 models in a much shorter time than before.

2-Hour Training? The Significance and Limitations of Nanochat

Of course, a short 2-hour training time is an amazing result, but there are a few things to consider. First, it requires high-performance hardware such as NVIDIA H100 GPUs. It may be difficult to achieve the same results in a typical environment. Second, the GPT-2 model itself is quite large, and the training dataset is also vast. Therefore, training times may be shorter when using smaller models or datasets.

However, even considering these limitations, the significance of the Nanochat project is immense. The fact that the GPT-2 model can be trained in just 2 hours greatly expands the possibilities of AI model training and provides opportunities for more people to participate in AI development. Furthermore, the technical know-how of Nanochat can be applied to other AI projects and contribute to overall AI technology advancement. Research on lightweighting and efficient training of GPT-2 models is expected to continue in the future.

What Impact Will AI Advancement Have on the Industry? And What About the Future?

The drastic reduction in GPT-2 model training time through the Nanochat project is expected to accelerate the pace of advancement in the AI industry. Model development cycles will be shortened, and new ideas can be quickly experimented with, leading to accelerated innovation in AI technology. Moreover, as large language models (LLMs) like the GPT-2 model become more accessible, innovative services utilizing LLMs are expected to emerge in various fields.

For example, innovative services utilizing LLMs may emerge in various fields such as chatbots, translation, content creation, and search engines. Furthermore, LLMs can be used in various fields such as personalized education, medical diagnosis, and financial analysis. As the efficiency of GPT-2 model training increases, these possibilities will become more realistic. AI technology is expected to deeply penetrate various aspects of our lives and lead to innovation in the future.

In-Depth Analysis and Implications

- NVIDIA H100 GPU Utilization: Significantly improved training speed by leveraging high-performance GPUs.

- Efficient Training Pipeline Construction: Increased training efficiency by eliminating unnecessary steps and maximizing parallel processing.

- Dataset Optimization: Improved model performance by utilizing a high-quality dataset.

- Model Lightweighting Technology: Reduced the size of the GPT-2 model to shorten training time and reduce resource usage.

- Distributed Training Technology: Shortened overall training time by using multiple nodes to train the model in a distributed manner.

Original Source: Nanochat Can Now Train a GPT-2 Level Model in Just 2 Hours

English

English  한국어

한국어 日本語

日本語