ML Model Deployment, Worry-Free Now! 4 Safe Strategies Revealed!

Developing an ML model is like baking a delicious cake. You follow the recipe, mix the ingredients, and bake it in the oven – finally, a perfect cake is born. But before you offer this cake to the world, you need to taste it and ensure its safety. The same applies to ML models. Even a model that appears to have excellent performance needs to be verified in a real environment. Otherwise, it can damage the user experience or cause unexpected problems. That’s where ML Model Deployment strategies shine!

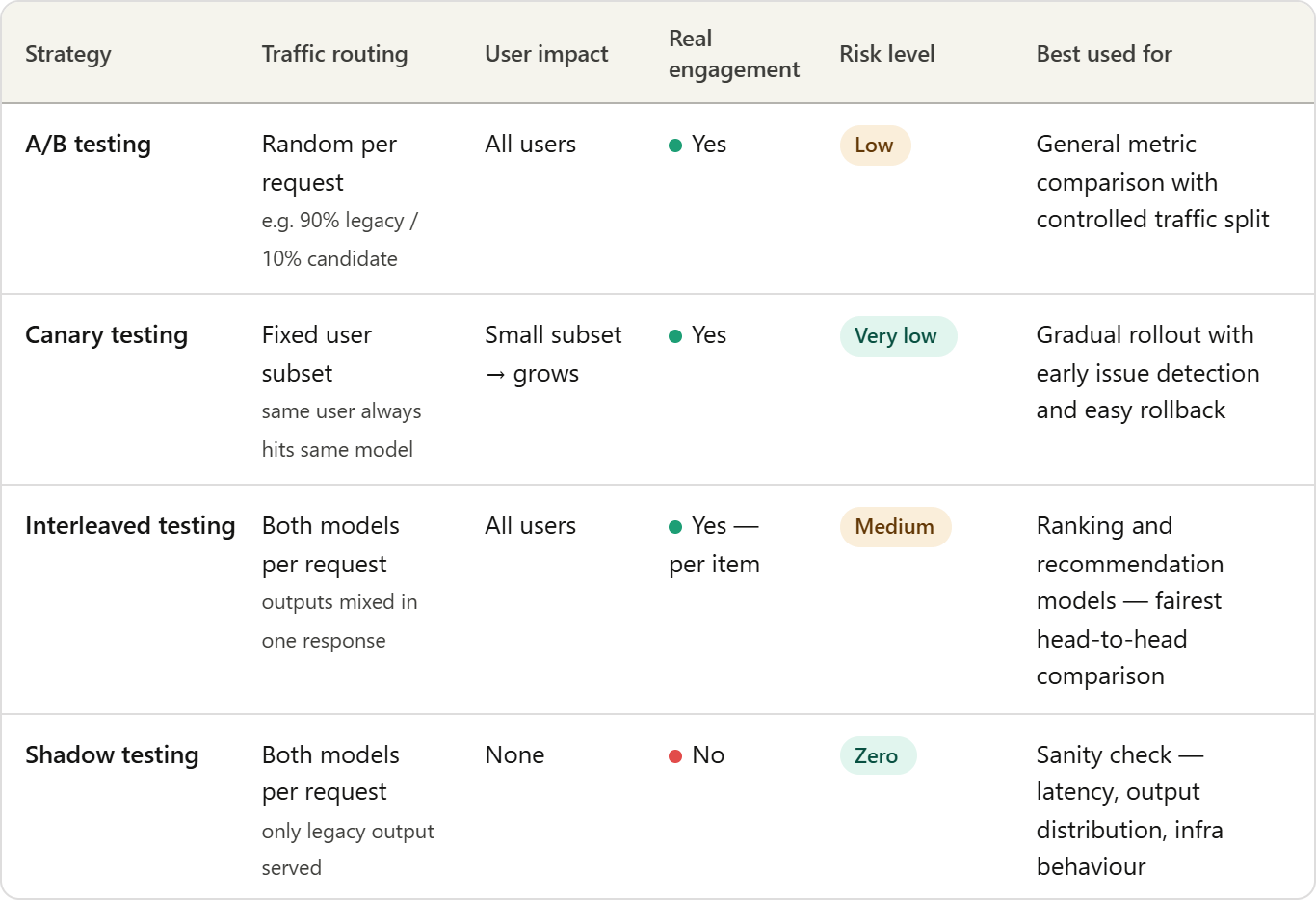

Many ML engineers struggle when deploying new models. It’s like a first date – tense and worrisome, with a fear of what might go wrong. But don’t worry! Today, we’ll introduce 4 safe strategies to help you navigate your first date successfully: A/B Testing, Canary Testing, Interleaved Testing, and Shadow Testing! These strategies will help you manage the ML Model Deployment process more safely and efficiently.

1. A/B Testing: A Cautious Taste Test

A/B testing is one of the most common methods. Much like trying a new recipe against an old favorite, it compares the existing model (control) with the new model (variation). Traffic is split into two groups: one group is served by the existing model and the other by the new model. It’s like comparing two different cake flavors to see which is more popular. You can limit risk by sending, for example, 90% of users to the existing model and only 10% to the new one. You then compare metrics such as click-through rate, conversion rate, engagement, and sales to confirm whether the new model actually delivers better results. A/B testing is often a natural first step in ML Model Deployment, a process of cautious tasting.
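To make the 90/10 split concrete, here is a minimal sketch of one common way to assign users to groups: hashing the user ID so the same user always lands in the same group. The function names, the `salt` value, and the default 10% share are illustrative assumptions, not part of any particular platform.

```python
import hashlib


def assign_variant(user_id: str, treatment_share: float = 0.10,
                   salt: str = "new-model-experiment") -> str:
    """Deterministically map a user to 'control' or 'treatment'.

    The salt is a hypothetical experiment name; changing it reshuffles users.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    # Turn the first 8 hex digits into a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "treatment" if bucket < treatment_share else "control"


def conversion_rate(conversions: int, users: int) -> float:
    """Share of users who converted; 0.0 when the group is empty."""
    return conversions / users if users else 0.0
```

Because the assignment depends only on the user ID and the salt, a returning user keeps tasting the same "cake flavor" for the whole experiment, which keeps the comparison between the two groups clean.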

2. Canary Testing: A Canary’s Warning Signal

Canary testing takes its name from miners bringing canaries into coal mines: it detects risks by first exposing a new model to a small number of users. Just as a canary reacts to poisonous gas and warns the miners, canary testing shows how a new model behaves in a real environment before everyone depends on it. Instead of applying the new model to all users at once, you apply it to a specific user group and gradually widen the rollout as long as performance metrics stay healthy. If a problem occurs, you can immediately roll back the model to minimize damage. Canary testing acts as a safety net in the ML Model Deployment process, helping to detect unexpected risks early.
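The "widen gradually, roll back on trouble" loop can be sketched as a tiny controller. This is only an illustration under assumed values: the stage percentages, the 2% error threshold, and the `CanaryRollout` class itself are hypothetical, and a real rollout would likely watch more than one metric.

```python
from dataclasses import dataclass


@dataclass
class CanaryRollout:
    # Assumed traffic shares for each stage of the rollout.
    stages: tuple = (0.01, 0.05, 0.25, 1.0)
    # Assumed threshold: roll back if the canary's error rate exceeds this.
    max_error_rate: float = 0.02
    stage_index: int = 0
    rolled_back: bool = False

    @property
    def traffic_share(self) -> float:
        """Fraction of traffic the new model should currently receive."""
        return 0.0 if self.rolled_back else self.stages[self.stage_index]

    def report(self, requests: int, errors: int) -> float:
        """Record one observation window and return the new traffic share."""
        if self.rolled_back or requests == 0:
            return self.traffic_share
        if errors / requests > self.max_error_rate:
            # The canary stopped singing: send all traffic back to the old model.
            self.rolled_back = True
        elif self.stage_index < len(self.stages) - 1:
            # Metrics look healthy: move to the next, larger stage.
            self.stage_index += 1
        return self.traffic_share
```

A healthy window moves the rollout one stage forward, while a single bad window drops the new model's share to zero and keeps it there until someone investigates.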

3. Interleaved Testing: Models Competing Together

Interleaved testing is like two chefs making different dishes with the same ingredients: users are shown a mixture of results from multiple models. When a request arrives, both the existing model and the new model make predictions, and the two results are combined into a single response. In a recommendation system, for example, some items come from the existing model and others from the new model. User reactions (click-through rates, viewing times, feedback, etc.) are recorded and attributed to the model that produced each item, so the models can be compared. Because every user sees output from both models, interleaved testing helps evaluate ML Model Deployment while minimizing bias caused by differences in user groups or traffic distribution.
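The article does not say how the two result lists are mixed. One widely used scheme is team-draft interleaving, where the models take turns picking their best remaining item. The sketch below assumes that scheme; the function names and the click-counting helper are illustrative.

```python
import random


def team_draft_interleave(ranking_a, ranking_b, k, rng=None):
    """Merge two rankings into up to k items and remember who picked each."""
    rng = rng or random.Random()
    rankings = {"A": ranking_a, "B": ranking_b}
    picks = {"A": 0, "B": 0}
    merged, credit = [], {}
    while len(merged) < k:
        # The model with fewer picks goes first; a coin flip breaks ties.
        if picks["A"] != picks["B"]:
            order = ["A", "B"] if picks["A"] < picks["B"] else ["B", "A"]
        else:
            order = rng.sample(["A", "B"], 2)
        for team in order:
            # Best item from this model that is not already in the merged list.
            candidate = next(
                (item for item in rankings[team] if item not in credit), None
            )
            if candidate is not None:
                merged.append(candidate)
                credit[candidate] = team
                picks[team] += 1
                break
        else:
            break  # Both rankings are exhausted.
    return merged, credit


def clicks_per_model(credit, clicked_items):
    """Count clicks attributed to each model via the credit map."""
    counts = {"A": 0, "B": 0}
    for item in clicked_items:
        if item in credit:
            counts[credit[item]] += 1
    return counts
```

Here `ranking_a` would come from the existing model and `ranking_b` from the new one. Over many requests, the model that collects more credited clicks is the one users prefer.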

4. Shadow Testing: Experimentation in the Shadows

Shadow testing runs a new model in the real production environment without affecting users, like a shadow. The new model receives the same requests as the existing model, but its predictions are only logged and never returned to users. This lets you evaluate how the new model behaves with actual traffic and infrastructure, and compare its logged predictions against those of the existing model. Shadow testing reduces the risk of ML Model Deployment without affecting users and gives you an early picture of real-world performance. It’s like testing new technology in a hidden laboratory.
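In code, the idea boils down to "answer with the old model, quietly record what the new one would have said". The sketch below is a simplified, synchronous illustration: `serve_with_shadow`, the callable models, and the in-memory `shadow_log` list are all assumptions made for the example. A production setup would more likely run the shadow call asynchronously so it adds no latency for the user.

```python
import logging

logger = logging.getLogger("shadow")


def serve_with_shadow(request, primary_model, shadow_model, shadow_log):
    """Return the primary model's answer; log the shadow model's answer."""
    response = primary_model(request)
    try:
        shadow_prediction = shadow_model(request)
        shadow_log.append(
            {"request": request, "primary": response, "shadow": shadow_prediction}
        )
    except Exception:
        # A failing shadow model must never affect what the user receives.
        logger.exception("shadow model failed")
    return response
```

The important design choice is that errors in the shadow path are caught and logged, so the user always gets the existing model's response no matter how the new model behaves.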

ML Model Deployment, Now with Confidence!

Deploying new ML models can be challenging, but by utilizing these 4 strategies, you can manage the process safely and efficiently. A/B testing, Canary testing, Interleaved testing, and Shadow testing will be your reliable allies for ML Model Deployment success. Now, go ahead and introduce your new ML models to the world with confidence! And remember, small changes can lead to big success!

Industry Impact and Future Outlook

These controlled rollout strategies increase the stability and reliability of ML models and play an important role in improving user experience and business performance. As ML Model Deployment grows more complex, they are becoming essential. In the future, they are likely to be integrated more closely with automated ML Model Deployment platforms, making management and operation more efficient.

Furthermore, these strategies are useful not only for deploying models safely, but also for continuously improving model performance and adding new features. For example, Canary testing can be used to measure the initial performance of a new model, then to repeat the cycle of identifying and correcting problems. These ongoing improvement efforts raise the quality of ML models and ultimately contribute to business value. In the future, ML Model Deployment will play an even more important role and help drive innovation across industries.
